While ChatGPT presents itself as a revolutionary tool for communication and creativity, its polished exterior hides a darker side. Its broad access to information can be abused to spread misinformation and perpetuate harmful biases. Developers have so far failed to adequately address these shortcomings, leaving users exposed to consequences that were not foreseen.

- The model can generate convincing falsehoods, making it a potent tool for disinformation campaigns.

- Moral concerns surrounding bias and accountability remain largely unaddressed, raising critical questions about its long-term impact.

- The widespread adoption of ChatGPT could lead to a flood of generated content, eroding the authenticity of human expression.

The Perils of ChatGPT: A Critical Look at Its Flaws

ChatGPT, the versatile AI chatbot, has captivated the world with its ability to produce human-like text. Yet, beneath this polished exterior lie various potential perils that warrant keen scrutiny. While ChatGPT excels at mimicking linguistic patterns, it often struggles to grasp the subtleties of human communication. This can lead to flawed outputs that misrepresent information, potentially circulating harmful misconceptions.

Moreover, ChatGPT's reliance on immense datasets for training raises concerns about fairness. The data it has learned from may contain preexisting societal biases that are reinforced in its outputs, perpetuating harmful stereotypes and unfair practices.

Finally, the unrestricted nature of ChatGPT's capabilities poses a significant risk for abusive applications. It can be exploited to produce fraudulent content, spread disinformation, or even intimidate individuals. Addressing these perils requires a multi-faceted approach that includes establishing robust safeguards, promoting accountability in AI development, and fostering ethical engagement with this powerful technology.

ChatGPT's Hidden Risks: A Look at Potential Harms

While ChatGPT presents exciting possibilities, it also gives cause for concern. Its unprecedented capabilities raise ethical dilemmas, and the potential for misuse is undeniable. From spreading misinformation to manipulating individuals, ChatGPT's unintended consequences demand careful scrutiny.

- Data breaches

- Social manipulation

- Bias and discrimination

Addressing these risks requires a multi-faceted strategy. Regulators, developers, and users must work together to address the potential for harm and ensure that ChatGPT is used responsibly. This joint action is crucial to harnessing the benefits of AI while safeguarding against its negative consequences.

Community Complaints: Revealing ChatGPT's Shortcomings

ChatGPT, the groundbreaking AI chatbot developed by OpenAI, has recently faced a wave of criticism. While it was initially celebrated for its sophisticated language generation, users are now highlighting several key weaknesses.

This backlash centers on issues such as factual errors in responses, a tendency to produce plausible but false information, and limitations in handling nuanced requests.

- This also includes examples of the AI's generated text.

As a result, these community complaints are prompting a debate about the responsible use of large language models like ChatGPT.

Is ChatGPT Too Dangerous? The Growing Concern Over AI Bias

As the capabilities of large language models like ChatGPT continue to grow, concerns are mounting about the dangers posed by flaws inherent in these systems. Critics argue that AI, trained on vast datasets of human-generated text, inevitably absorbs societal biases, which can amplify existing inequalities and favor certain groups. This raises pressing questions about the ethical implications of deploying AI in high-stakes domains such as law enforcement.

Mitigating these biases requires a multifaceted approach that includes:

- Carefully curated datasets, algorithmic transparency, and ongoing monitoring

- Diverse development teams, rigorous testing protocols, and public accountability

- Investments in fairness research, ethical guidelines for AI development, and inclusive design principles

The potential consequences of unchecked AI bias are significant, with the risk of worsening societal problems and eroding trust in technology. As we navigate this uncharted territory, it is imperative to prioritize ethical considerations and aim for AI systems that are both capable and fair.

ChatGPT and the Future: Navigating the Ethical Dilemmas

ChatGPT, a potent new AI technology, presents both opportunities and profound ethical challenges. As we venture deeper into this uncharted territory, it's crucial to address these problems with care. One fundamental challenge is the potential for misuse, as ChatGPT's ability to produce convincing text can be abused.

- Furthermore, there are questions about accountability in AI decision-making. It is important to understand how ChatGPT arrives at its outputs, and who is ultimately responsible for the AI's actions.

- Additionally, the effects of ChatGPT on human interaction need careful analysis. As AI becomes more commonplace in our lives, it is crucial to preserve the value of real human relationships.

Navigating these ethical dilemmas will require a comprehensive approach involving cooperation between developers, ethicists, and the general public. Only through transparent discussion and a collective commitment to responsible development can we ensure that ChatGPT and other AI technologies are used for the benefit of humanity.

Alana "Honey Boo Boo" Thompson Then & Now!

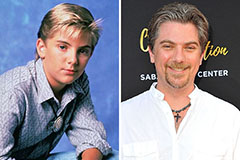

Alana "Honey Boo Boo" Thompson Then & Now! Jeremy Miller Then & Now!

Jeremy Miller Then & Now! Michael Fishman Then & Now!

Michael Fishman Then & Now! Justine Bateman Then & Now!

Justine Bateman Then & Now! Sarah Michelle Gellar Then & Now!

Sarah Michelle Gellar Then & Now!